Getting Started with AI Functions

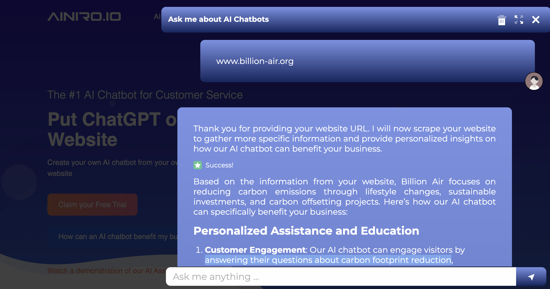

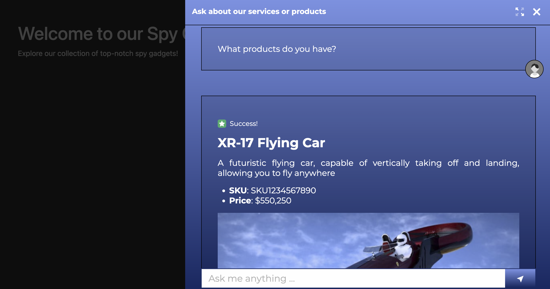

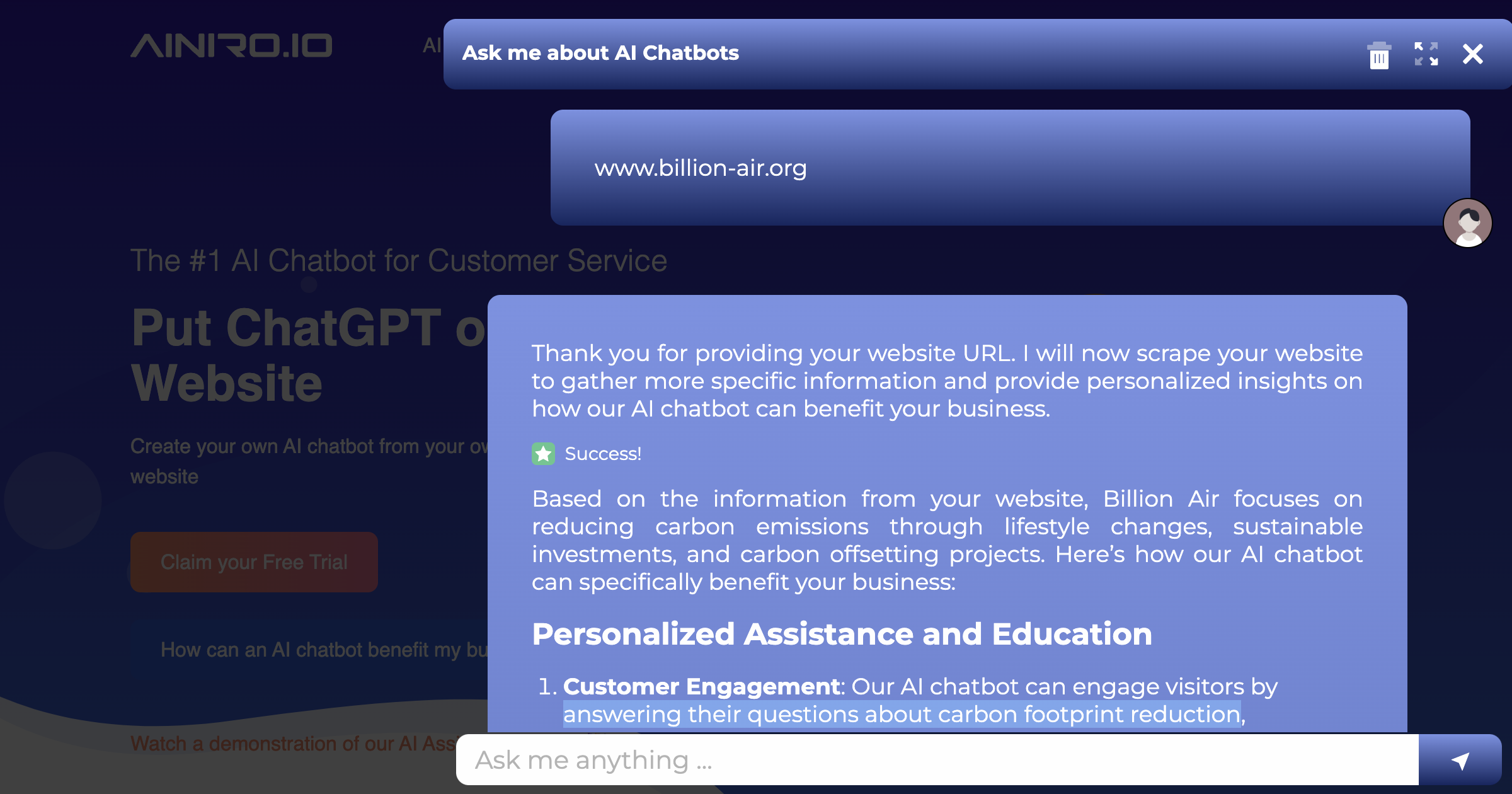

Over the last week we've gone all in on AI functions. AI functions let you create AI agents, allowing your AI chatbot to "do things" instead of just passively generating text.

To understand the power of such functions you can read some of our previous articles about the subject.

- Demonstrating our new AI functions

- How to build a Shopping Cart AI Chatbot

- The Sickest B2B sales AI Chatbot you have Ever Seen

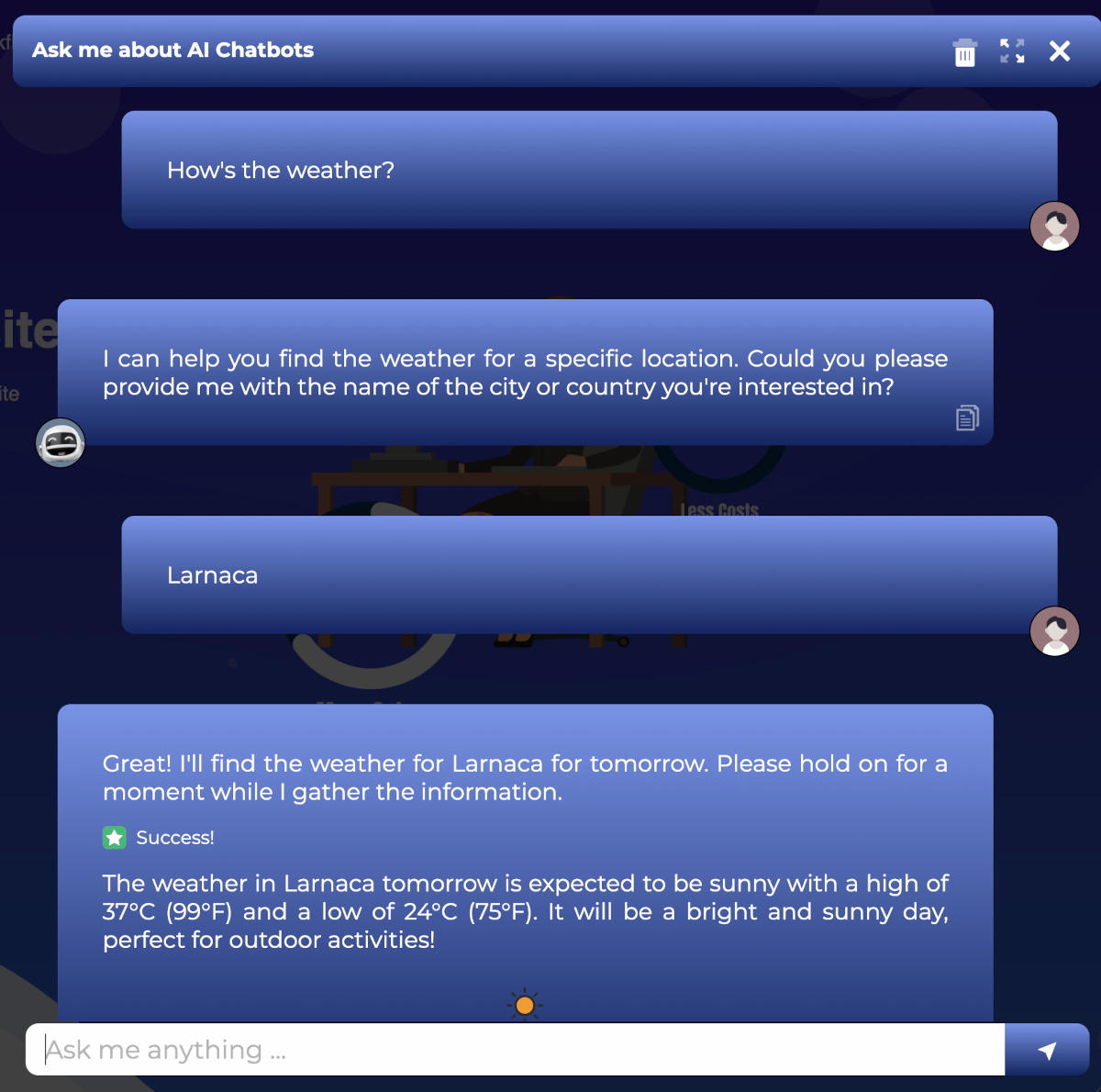

You can also try out our AI functions by, for instance, asking our chatbot: "How's the weather today?"

How an AI function works

If a user asks a question we've got an AI function for, we instruct OpenAI to return a "function invocation". A function invocation for our AI chatbot resembles the following.

    ___
    FUNCTION_INVOCATION[/modules/openai/workflows/workflows/web-search.hl]:
    {
      "query": "Who is the World Champion in Chess",
      "max_tokens": 4000
    }
    ___

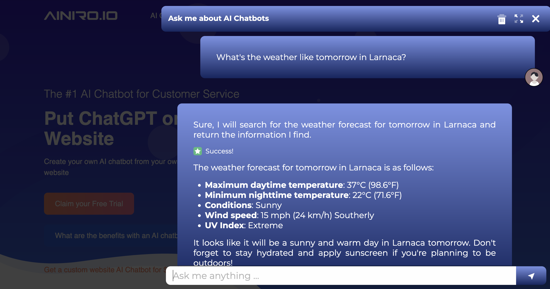

If the AI function takes arguments, and the user has not specified values for these arguments, OpenAI will ask the user for the missing values. You can see this process in the following screenshot.

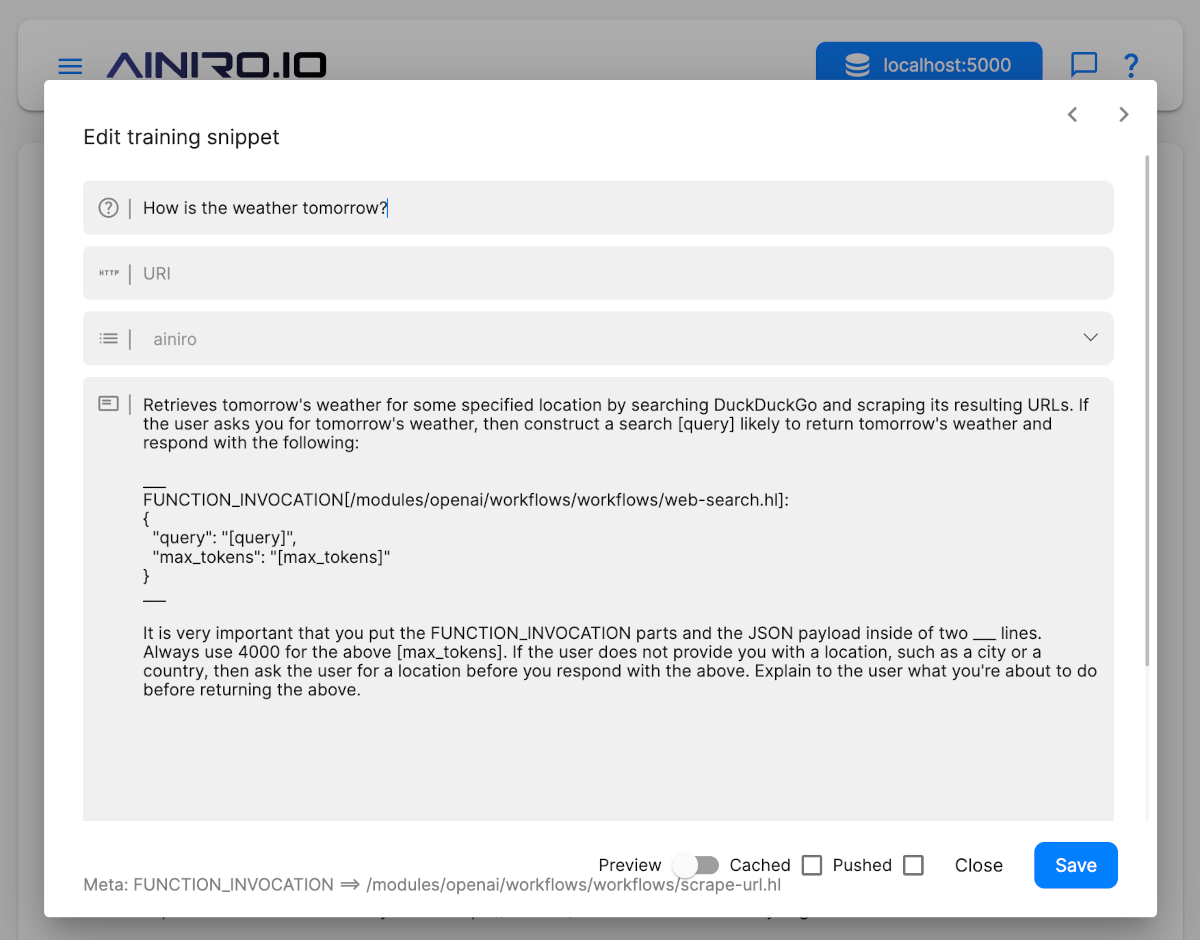

The whole training snippet for our weather logic looks like this.

Prompt

    How is the weather tomorrow?

Completion

    Retrieves the weather for some specified location by searching DuckDuckGo
    and scraping its resulting URLs. If the user asks about the weather,
    then construct a search [query] likely to return the weather and respond
    with the following:
    ___
    FUNCTION_INVOCATION[/modules/openai/workflows/workflows/web-search.hl]:
    {
      "query": "[query]",
      "max_tokens": "[max_tokens]"
    }
    ___
    It is very important that you put the FUNCTION_INVOCATION parts and the JSON
    payload inside of two ___ lines. Always use 4000 for the above [max_tokens].
    If the user does not provide you with a location, such as a city or a country,
    then ask the user for a location before you respond with the above. Explain to
    the user what you're about to do before returning the above.

If you look carefully at the above screenshot, you will see that I didn't provide a location, so the chatbot asks for a city or a country before it actually returns the function invocation.

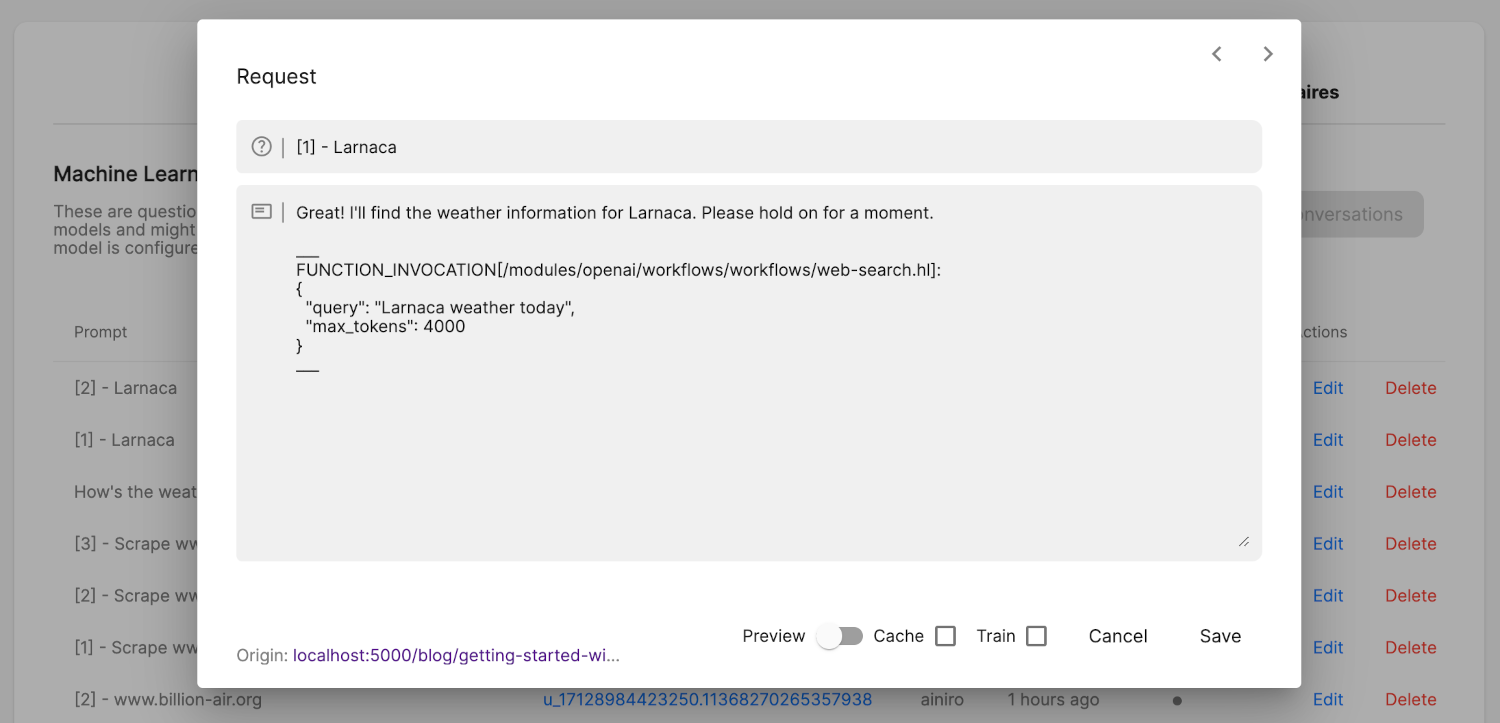

When OpenAI returns, we check if it returned ___ (3 underscore characters), and if it did, we check if there are any function invocations in one of its sections. If there are function invocations, and these are declared on the specific type/model, we execute these functions, and invoke OpenAI afterwards with the result of the function invocation. Typically OpenAI will return something resembling the following once a function invocation has been identified.

    Great! I'll find the weather information for Larnaca. Please hold on for a moment.
    ___
    FUNCTION_INVOCATION[/modules/openai/workflows/workflows/web-search.hl]:
    {
      "query": "Larnaca weather today",
      "max_tokens": 4000
    }
    ___
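To make the check described above more concrete, here is a minimal Python sketch of how a response like this could be split on its `___` lines and scanned for function invocations. It is not our backend's actual code. The name `parse_invocations` and the regular expression are assumptions made purely for illustration.

```python
import json
import re

# Assumed pattern: FUNCTION_INVOCATION[<path>], optionally followed by ':'
# when a JSON payload comes next.
INVOCATION = re.compile(r"FUNCTION_INVOCATION\[(?P<path>[^\]]+)\](?P<colon>:)?")


def parse_invocations(response: str) -> list[tuple[str, dict]]:
    """Return (path, arguments) pairs found between ___ lines in a response."""
    # No ___ separator means there is nothing to look for.
    if "___" not in response:
        return []
    found = []
    # Split on lines consisting only of three underscores.
    for section in re.split(r"^___[ \t]*$", response, flags=re.MULTILINE):
        match = INVOCATION.search(section)
        if match is None:
            continue  # plain text section, such as the explanation to the user
        arguments = {}
        if match.group("colon"):
            # Everything after the colon is expected to be the JSON payload.
            arguments = json.loads(section[match.end():])
        found.append((match.group("path"), arguments))
    return found
```

Because the function returns a list, a response containing several `___` sections, each with its own invocation, would yield several entries.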

This allows us to have OpenAI dynamically assemble "code" that executes on your cloudlet, then transmit the result of executing the code back to OpenAI, and have it answer your original question. The last part is important to understand: when an AI function is invoked, the backend actually invokes OpenAI twice, once to generate the function invocation "code", and once more to answer the original question based upon whatever the function invocation returned.

The cloudlet again will transmit the result of the function invocation as JSON to OpenAI, allowing OpenAI to semantically inspect it, and answer the original query, using the function invocation's result as its primary source for information required to answer the question.
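The two-step round trip can be sketched roughly as follows. This is a simplified Python illustration under stated assumptions, not the cloudlet's implementation: `complete` stands in for whatever calls OpenAI, `extract` for whatever parses the `___` sections, and `declared` for the functions declared on the type. All three names are hypothetical, as is the choice of sending the result back as a user message.

```python
import json
from typing import Callable

Invocation = tuple[str, dict]


def answer_with_function(
    question: str,
    complete: Callable[[list[dict]], str],          # hypothetical wrapper around the LLM call
    extract: Callable[[str], list[Invocation]],     # hypothetical parser for ___ sections
    declared: dict[str, Callable[[dict], object]],  # functions declared on the type
) -> str:
    messages = [{"role": "user", "content": question}]
    # First invocation: the model may answer directly or return function invocations.
    first = complete(messages)
    invocations = extract(first)
    if not invocations:
        return first
    results = []
    for path, arguments in invocations:
        if path not in declared:
            continue  # never execute a function that is not declared on the type
        results.append({"function": path, "result": declared[path](arguments)})
    # Second invocation: hand the function result back as JSON (assumed message shape).
    messages.append({"role": "assistant", "content": first})
    messages.append({"role": "user", "content": json.dumps(results)})
    return complete(messages)
```

Passing the model call and the parser in as parameters keeps the sketch runnable without any network access, which also makes it easy to try out with stubs.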

You can actually see this process in your History tab by how you've got multiple requests for a single question resembling the following.

- [1] - Larnaca

- [2] - Larnaca

The first history request above is when the user answers "Larnaca", and this request returns the function invocation. The second request is the answer OpenAI generated from the JSON payload that was created by invoking the function and transmitted to OpenAI. You can see what this should look like in your History tab in the following screenshot.

Since we are by default keeping up to a maximum of 15 session items while invoking OpenAI, this allows the user to ask follow up questions based upon the result of a function invocation, such as for instance "How is the UV index for tomorrow", without this triggering another invocation, as long as the information exists in the original result.
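A capped session like this can be pictured with a small Python sketch. The `Session` class is hypothetical, and dropping the oldest item first is an assumption; only the limit of 15 comes from the text above.

```python
from collections import deque

MAX_SESSION_ITEMS = 15  # the default mentioned above


class Session:
    """Keeps only the most recent items of a conversation."""

    def __init__(self, max_items: int = MAX_SESSION_ITEMS):
        # A deque with maxlen silently discards the oldest item when full.
        self._items = deque(maxlen=max_items)

    def add(self, role: str, content: str) -> None:
        self._items.append({"role": role, "content": content})

    def messages(self) -> list[dict]:
        return list(self._items)
```

As long as the function invocation's result is still among the retained items, a follow-up question can be answered from it without another invocation.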

Internals

You can create prompts for your AI chatbot that execute multiple AI functions, either simultaneously or consecutively, for instance:

- "Find all contacts whose name contains either 'John' or 'Peter'". If you've got an AI function allowing you to filter on name, this will likely result in two AI function invocations executed at the same time, since OpenAI will likely return two function invocations in one response.

- "Find the contact name 'John Doe' and create a new note for him being 'Very nice customer'". If you've got one AI function allowing you to filter on name, and another allowing you to create a new note, then OpenAI will likely first respond with a function invocation that finds the contact. The cloudlet transmits the result to OpenAI, which then responds with a function invocation that creates the note, and the result of that is passed to OpenAI again.

This allows you to provide the chatbot with prompts that invoke AI functions in any complexity you want, with a single prompt in theory resulting in 100+ function invocations.

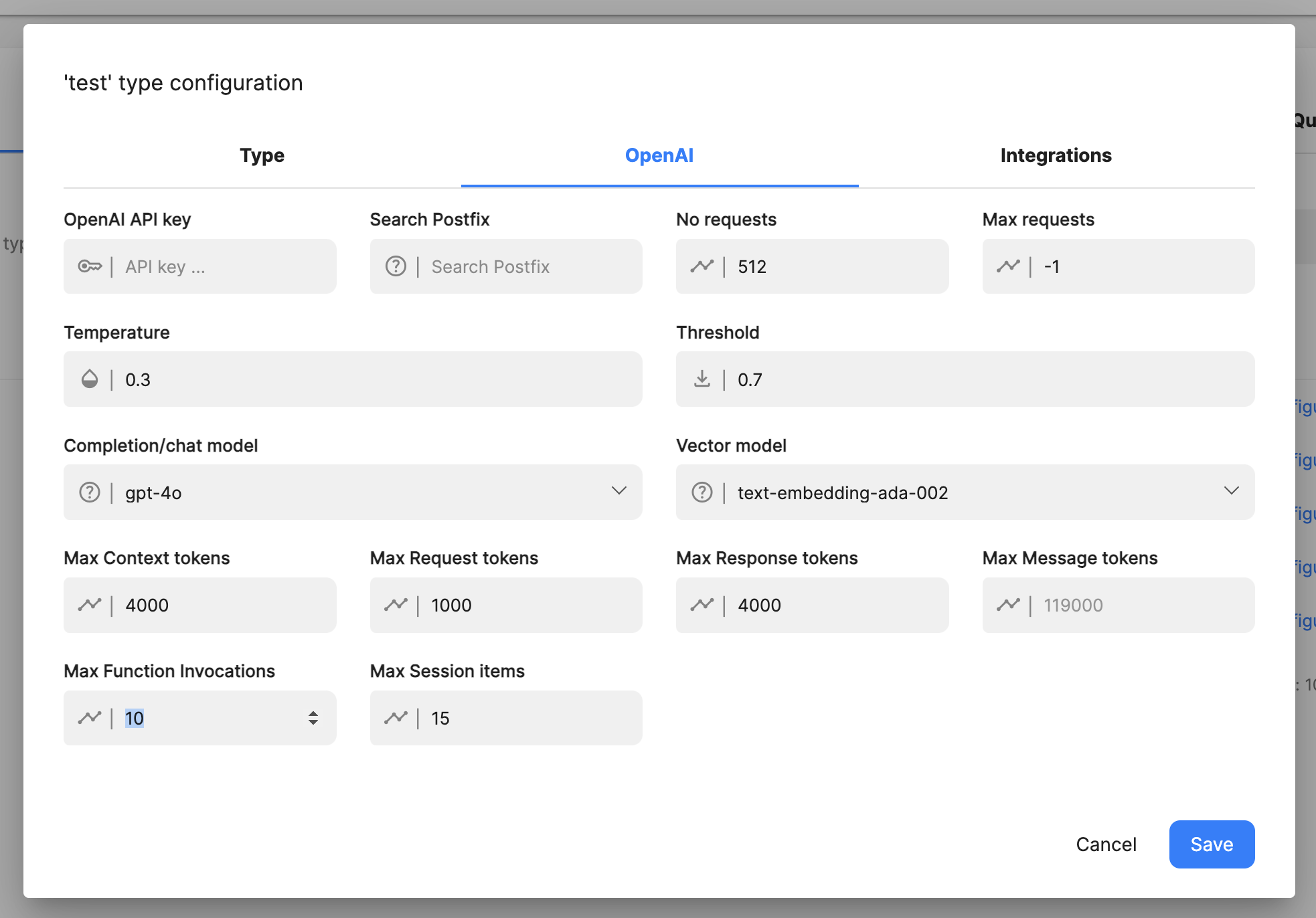

Notice, to avoid having your AI model enter a "never ending recursive loop", invoking OpenAI an infinite number of times, or 100 times for that matter due to a bug in your prompt engineering or something, we've implemented the ability to restrict the maximum number of times the model will invoke OpenAI consecutively after having executed an AI function. You can find this setting in the OpenAI section of your model's configuration.

The default value for this is 5, but for complex models intended to be used as AI assistants, you might want to increase this number to 10 or 15 or something. This is the maximum number of times the model will invoke OpenAI for a single prompt. To understand the counting process here, imagine the following prompt: "Find the contact name 'John Doe' and create a new note for him being 'Very nice customer'". The sequence of events for this particular prompt might be as follows.

- The prompt is passed to OpenAI.

- Unless the prompt triggered a training snippet that allows us to find a contact, OpenAI might return a function invocation allowing it to search through your training snippets for such a function. See the "Search for AI function" parts below to understand how this process works.

- The function declaration is found by executing our search AI function, and then sent to OpenAI, which returns a function invocation allowing it to find our "John Doe" contact.

- John Doe is found and the result of the function invocation is passed back into OpenAI.

- Unless OpenAI at this point has the function declaration required to create a note for the contact, OpenAI returns a function invocation allowing it to search for this function.

- The search for function parts is executed and results in another function invocation declaration, allowing the cloudlet to create a note for the user.

- This function declaration is sent to OpenAI that now knows the ID of the contact.

- OpenAI returns a function invocation allowing it to create a note for the user.

- This function is executed, and the result is sent to OpenAI again for a final time.

Worst case scenario for the above prompt, we're looking at the following sequence of prompts sent to OpenAI.

- Original prompt responds with search for "find contact" function invocation.

- Search for "find contact" function executed and the result sent to OpenAI, having OpenAI respond with a "find contact" function invocation.

- Find contact executed on cloudlet, and the result sent to OpenAI, that now responds with a function invocation allowing it to search for create note function.

- The cloudlet searches for a create note function that it sends to OpenAI.

- The cloudlet executes the create note function, and sends the response to OpenAI.

All in all, the above triggers 5 invocations to OpenAI, worst case scenario, unless the original prompt somehow manages to retrieve context also containing one or both of the original function declarations.
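The counting above can be illustrated with a short Python sketch of a capped loop. This is only a model of the idea, not the backend's code: `run_prompt`, `complete` and `execute` are hypothetical names, and `execute` is assumed to return `None` when the response contains no function invocation.

```python
from typing import Callable, Optional


def run_prompt(
    prompt: str,
    complete: Callable[[str], str],            # hypothetical: sends text to the model
    execute: Callable[[str], Optional[str]],   # hypothetical: runs invocations, None if there are none
    max_invocations: int = 5,                  # the default mentioned above
) -> tuple[str, int]:
    """Return the last model response and how many times the model was invoked."""
    invocations = 0
    payload = prompt
    while True:
        response = complete(payload)
        invocations += 1
        if invocations >= max_invocations:
            break  # limit reached, stop even if more invocations were requested
        result = execute(response)
        if result is None:
            break  # plain answer, nothing more to execute
        payload = result  # feed the function result back to the model
    return response, invocations
```

With the default of 5, the worst case walked through above just fits, while a prompt that keeps triggering function invocations forever is cut off after the fifth call.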

Notice, to understand the "Search for AI functions" parts above, you need to read the parts below about how to create an AI function that searches for an AI function.

How to declare an AI function

AI functions have to be declared on your type/model. This is a security feature, since without it anyone could prompt engineer your chatbot and have OpenAI return malicious function invocations that somehow harm your system. There are 3 basic ways to declare functions on your type:

- Using the UI and clicking the "Add function" on your type while having selected the training data tab in your machine learning component

- Manually create a training snippet that contains a function declaration resembling the above training snippet

- Adding a system message rule to your type's configuration

Numbers 1 and 2 above are probably easily understood, and are illustrated by the example AI function training snippet above. In either case, the function invocation must follow the syntax below. Notice the : after the function that takes parameters.

Function without payload

    ___
    FUNCTION_INVOCATION[/FOLDER/FILE_WITHOUT_PARAMS.hl]
    ___

Function with payload

    ___
    FUNCTION_INVOCATION[/FOLDER/FILE_WITH_PARAMETERS.hl]:
    {
      "PARAM1": "value1",
      "PARAM2": 123
    }
    ___
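If you write many training snippets by hand, a tiny helper can keep the two forms consistent. The Python sketch below simply renders the syntax shown above. `render_invocation` is a hypothetical name and not part of Magic.

```python
import json
from typing import Optional


def render_invocation(path: str, payload: Optional[dict] = None) -> str:
    """Render a function invocation between two ___ lines."""
    lines = ["___"]
    if payload is None:
        # No payload: no trailing colon.
        lines.append(f"FUNCTION_INVOCATION[{path}]")
    else:
        # With payload: a trailing colon followed by the JSON arguments.
        lines.append(f"FUNCTION_INVOCATION[{path}]:")
        lines.append(json.dumps(payload, indent=2))
    lines.append("___")
    return "\n".join(lines)


print(render_invocation("/FOLDER/FILE_WITH_PARAMETERS.hl", {"PARAM1": "value1", "PARAM2": 123}))
```

Placeholders such as `[query]` can be passed as ordinary string values in the payload.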

Adding AI Functions to your System Message

Having AI functions as training snippets is probably fine for most use cases. The above training snippet for instance will probably kick in on all related questions, such as ...

- How's the weather today?

- How is the weather going to be tomorrow?

- Check the weather in Oslo?

- Etc ...

However, for some "core AI functions", it might be better to add these as a part of your system instruction instead. Examples are "Search the web" or "Scrape a website". These are "core" functions, and you might imagine the user asking questions such as:

- Search for Thomas Hansen Hyperlambda and create a 2 paragraph summary

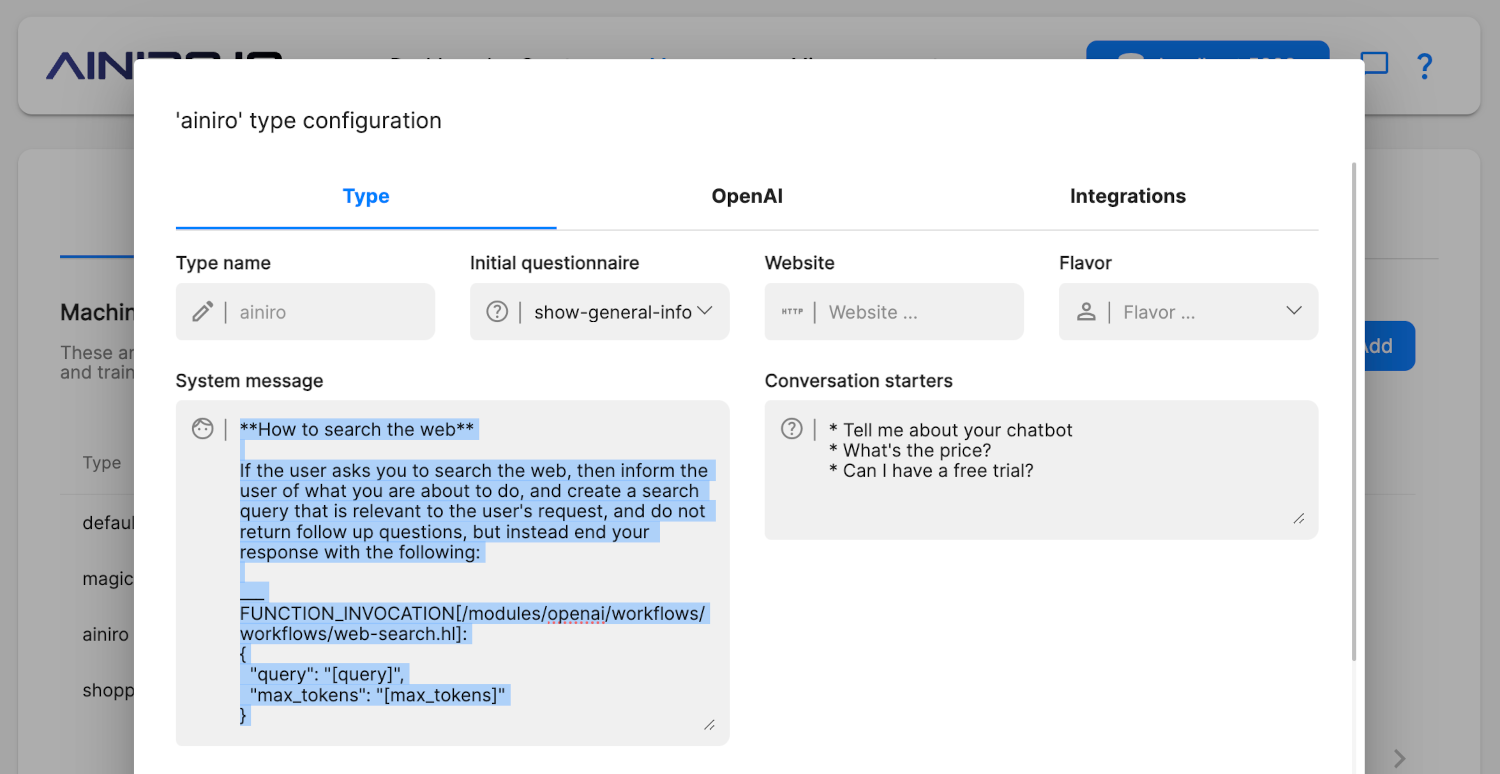

The above might not necessarily be able to find a training snippet with a prompt of "Search the web", so it might be better to add such AI functions into your system message, as a core part of your chatbot's instructions. On our AI chatbot we have done this with the following rule added to our system message.

    **How to search the web**
    If the user asks you to search the web, then inform the user of what you
    are about to do, and create a search query that is relevant to the user's
    request, and do not return follow up questions, but instead end your
    response with the following:
    ___
    FUNCTION_INVOCATION[/modules/openai/workflows/workflows/web-search.hl]:
    {
      "query": "[query]",
      "max_tokens": 4000
    }
    ___
    It is very important that you put the FUNCTION_INVOCATION parts and the
    JSON payload inside of two ___ lines. If the user does not tell you what
    to search for, then ask the user for what he or she wants to search for
    and use as [query] before responding with the above.

To add the above as a part of your system message, just click your machine learning type's "Configuration" button, and make sure you add the above text at a fitting position into your chatbot's system message field. Below is a screenshot of how we did this for our search the web function.

Creating "Search for AI function" functions

In addition to adding core function invocations into your system message, you can also create "search for function" functions and tell OpenAI that you have such a function. This allows OpenAI to return a function invocation that searches for a function declaration it for some reason does not have access to. Imagine the following being a part of your system message.

    If you cannot find the required function needed in your context,
    then respond with the following:
    ___
    FUNCTION_INVOCATION[/modules/openai/workflows/workflows/get-context.hl]:
    {
      "type": "[YOUR_TYPE_OR_MODEL_NAME_HERE]",
      "max_tokens": 4000,
      "query": "[QUERY]"
    }
    ___
    The above can have a [QUERY] value being for instance "Create [ENTITY]",
    "Read [ENTITY]", "Delete [ENTITY]", "Update [ENTITY]", or "List [ENTITY]",
    etc. This might provide you with another function you can respond with
    to perform the task the user is asking you to do. [ENTITY] in the above
    example would be the type of record the user is asking you to deal with.
    If the user is asking you to for instance; "Create a new contact named
    John Doe", then you could search for "Create contact" for instance,
    ignoring the parameters to the invocation in your search. You can also
    respond with the above [QUERY] having a value such as for instance
    "Send email", "Scrape website", or "Search the web", to retrieve the
    required function you need to execute to perform the task the user is
    asking you to do. Never change the above "type" parameter or the above
    "max_tokens" parameter, but keep these as they are.

This allows OpenAI to search through your machine learning type for specific functions it needs to perform your task.
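Conceptually, such a search boils down to finding the training snippets that contain a function declaration and best match the query. The Python sketch below uses naive word overlap purely to illustrate the idea. How `get-context.hl` actually ranks snippets is not described in this article, and `find_functions` along with the snippet layout are assumptions.

```python
def find_functions(snippets: list[dict], query: str, limit: int = 3) -> list[dict]:
    """Return the snippets declaring a function that best match the query."""
    words = set(query.lower().split())
    scored = []
    for snippet in snippets:
        # Only snippets that actually declare a function are interesting.
        if "FUNCTION_INVOCATION[" not in snippet["completion"]:
            continue
        overlap = len(words & set(snippet["prompt"].lower().split()))
        if overlap:
            scored.append((overlap, snippet))
    # Highest overlap first; ties keep their original order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [snippet for _, snippet in scored[:limit]]
```

A query such as "Create contact" would then surface the snippet declaring the create contact function, which is sent back to OpenAI as context.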

Notice, the above AI Workflow can be found in the OpenAI plugin. If you want to add the above to your cloudlet, you'll have to install this plugin through the plugins component.

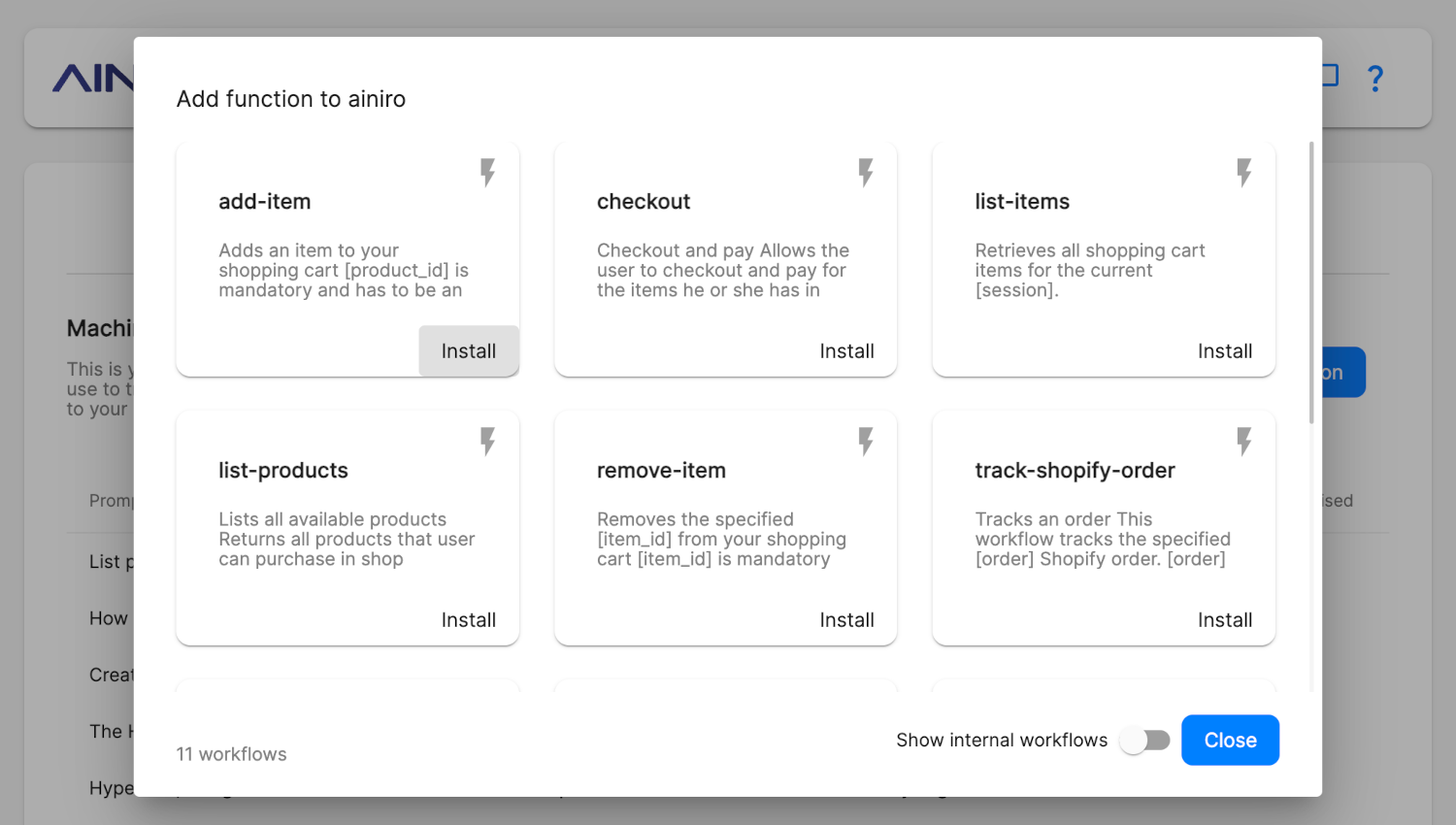

Low-Code and No-Code AI functions

In addition to manually constructing AI functions, you can also use our No-Code and Low-Code parts to automatically add AI functions to your type.

- Click training data

- Filter on the type you want to add a function to

- Click "Add function"

This brings up a form resembling the following.

These are pre-defined AI functions, and which functions you've got in your cloudlet depends upon which plugins you've installed. However, the core of Magic has a list of basic AI functions, such as the ability to send the owner of the cloudlet an email, etc.

If you need more AI functions, check out your "Plugins", and install whatever plugin happens to solve your need for AI functions. Notice, documenting AI functions in plugins could be improved. If you're looking for a specific AI function, you can send me an email, and I will show you which plugin you need, and/or create a new AI function for you that solves your problem.

Creating your own AI functions

In addition to the above, you can also create your own AI functions using Hyperlambda workflows. A Hyperlambda workflow is basically the ability to dynamically create Hyperlambda code, without having to manually write code, using Low-Code and No-Code constructs. You can manually write code, and add to your workflow, but coding is optional.

Creating a Hyperlambda Workflow is outside of the scope of this article, but as long as you put your Hyperlambda file inside a module folder named "workflows", the above "Add function to type/model" dialogue will allow the user to automatically install your AI function into a type/model. If you want to know more about Hyperlambda workflows you can check out the following tutorial.

Notice, when you create your Hyperlambda workflows you need to think carefully, because by default any anonymous user can execute the workflow's code on your cloudlet. You need to make sure the user is not allowed to execute code that might be maliciously constructed by prompt engineering your chatbot. The above tutorial is a really great example of how to create a workflow, but it's also a terrible example of an AI function, since allowing people to register in your AI chatbot is probably not a good idea - at least not the way it's implemented in the above tutorial.

Arguments to AI Functions

An AI Function is fundamentally just a Hyperlambda file, optionally taking arguments. You can create and use any Hyperlambda file you wish, but by default only Hyperlambda files that have an [.arguments] collection, have a [.type] of "public", and exist within a folder named "workflows" can be automatically installed into a machine learning type using the "Add function" button.

However, an AI Function can optionally also take some special arguments, that will be automatically populated by the system. These are as follows.

- [_session] - The current session of the user, allowing you to create AI Functions that know the session value of the user.

- [_user-id] - The user's user ID, which is a unique value created when the user is interacting with the AI chatbot for the first time.

- [_type] - The machine learning type that invoked the AI Function.

All special arguments are prefixed by an underscore (_) character to avoid name clashing with other arguments your AI Functions might need. To avoid clashing with future arguments we create at AINIRO, you should avoid declaring AI Functions taking arguments starting out with an underscore, unless you want to handle one of the pre-defined arguments from above.

For instance, if you want to create an AI Function that is using the machine learning type, you could do it as follows.

    // Example AI Function workflow taking the machine learning type
    .arguments
       _type:string
    .description:Example AI Function workflow taking the machine learning type
    .type:public
    lambda2hyper:x:../*
    log.info:x:-
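To picture how the special arguments end up in a function, here is a small Python sketch of the merge. It is an illustration only. The helper name is hypothetical, and both "only fill in special arguments the function declares" and "discard underscore arguments coming from the model" are assumptions rather than documented behaviour.

```python
SPECIAL_ARGUMENTS = ("_session", "_user-id", "_type")


def with_special_arguments(declared: set, model_args: dict, context: dict) -> dict:
    """Merge the model's arguments with values supplied by the system."""
    # Assumption: underscore-prefixed values from the model are discarded,
    # so a prompt cannot spoof the session, user ID or type.
    merged = {k: v for k, v in model_args.items() if not k.startswith("_")}
    for name in SPECIAL_ARGUMENTS:
        # Assumption: only special arguments the function declares are populated.
        if name in declared:
            merged[name] = context[name]
    return merged
```

For the workflow above, which declares only [_type], the function would receive the machine learning type from the system regardless of what the model returned.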

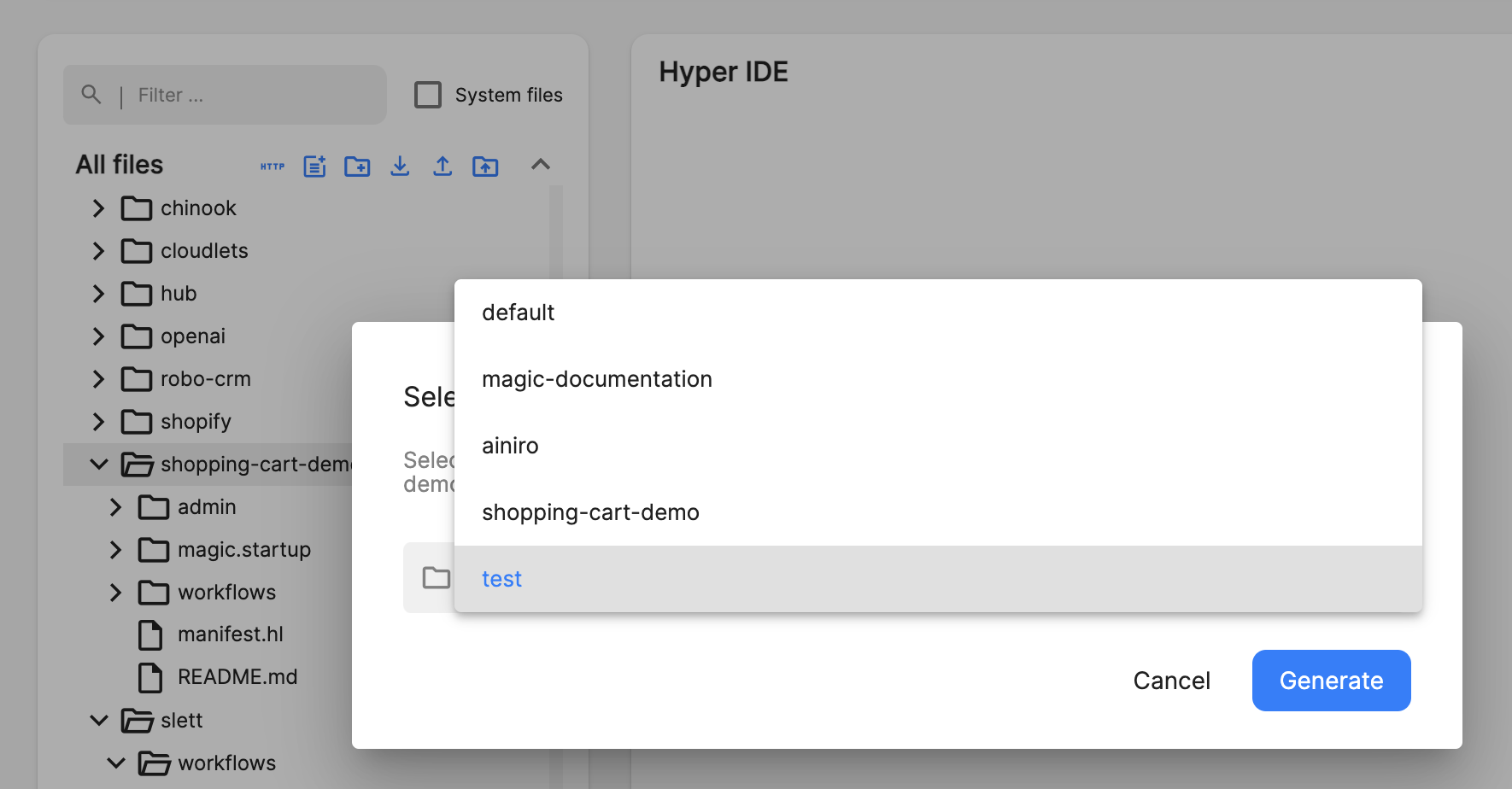

Creating AI Functions for an entire folder

In addition to the above 3 primary methods to create AI Functions, you can also recursively create AI Functions for all invokable Hyperlambda files inside an entire folder. This is done from Hyper IDE by hovering your mouse over a folder in it, and clicking the lightning / flash icon. This will bring up something resembling the following.

Once you click the above "Generate" button, the cloudlet will recursively traverse all Hyperlambda files in your selected folder, and create a default AI Function for all Hyperlambda files that somehow can be used as AI Functions. You need to be careful with this process, and you should manually edit all your AI Functions afterwards from your machine learning type's training snippets, since this might result in you adding AI Functions that are somehow not safe to expose to your AI model.
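The traversal can be imagined along the lines of the Python sketch below. It assumes the same criteria listed earlier for the "Add function" button (an [.arguments] collection, a [.type] of "public", and a parent folder named "workflows"). The generator's real criteria are not spelled out here, so treat both the criteria and the function names as assumptions.

```python
from pathlib import Path


def is_candidate(path: Path) -> bool:
    """Rough text check for a Hyperlambda file that could become an AI Function."""
    lines = [line.rstrip() for line in path.read_text(encoding="utf-8").splitlines()]
    return (
        path.parent.name == "workflows"   # assumed: file sits directly in a workflows folder
        and ".arguments" in lines         # has a top level [.arguments] collection
        and ".type:public" in lines       # declared as public
    )


def find_candidates(folder) -> list:
    """Recursively collect candidate Hyperlambda files below a folder."""
    return sorted(p for p in Path(folder).rglob("*.hl") if is_candidate(p))
```

Reviewing a list like this before generating anything is a cheap way to spot files you would rather not expose to your AI model.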

Prompt Engineering and AI is the Real No-Code Revolution

Most of the work related to creating AI functions is in fact prompt engineering and not coding. This allows you to leverage your prompt engineering skills to construct really complex functionality, arguably replacing your coding abilities with your prompt engineering skills.

In the future I anticipate that 90% of the work related to AI functions, and creative use cases, will not originate from software developers, but rather from prompt engineers and No-Code devs, that are able to intelligently assemble and combine pre-defined functions together, to solve complex problems, without having to code.

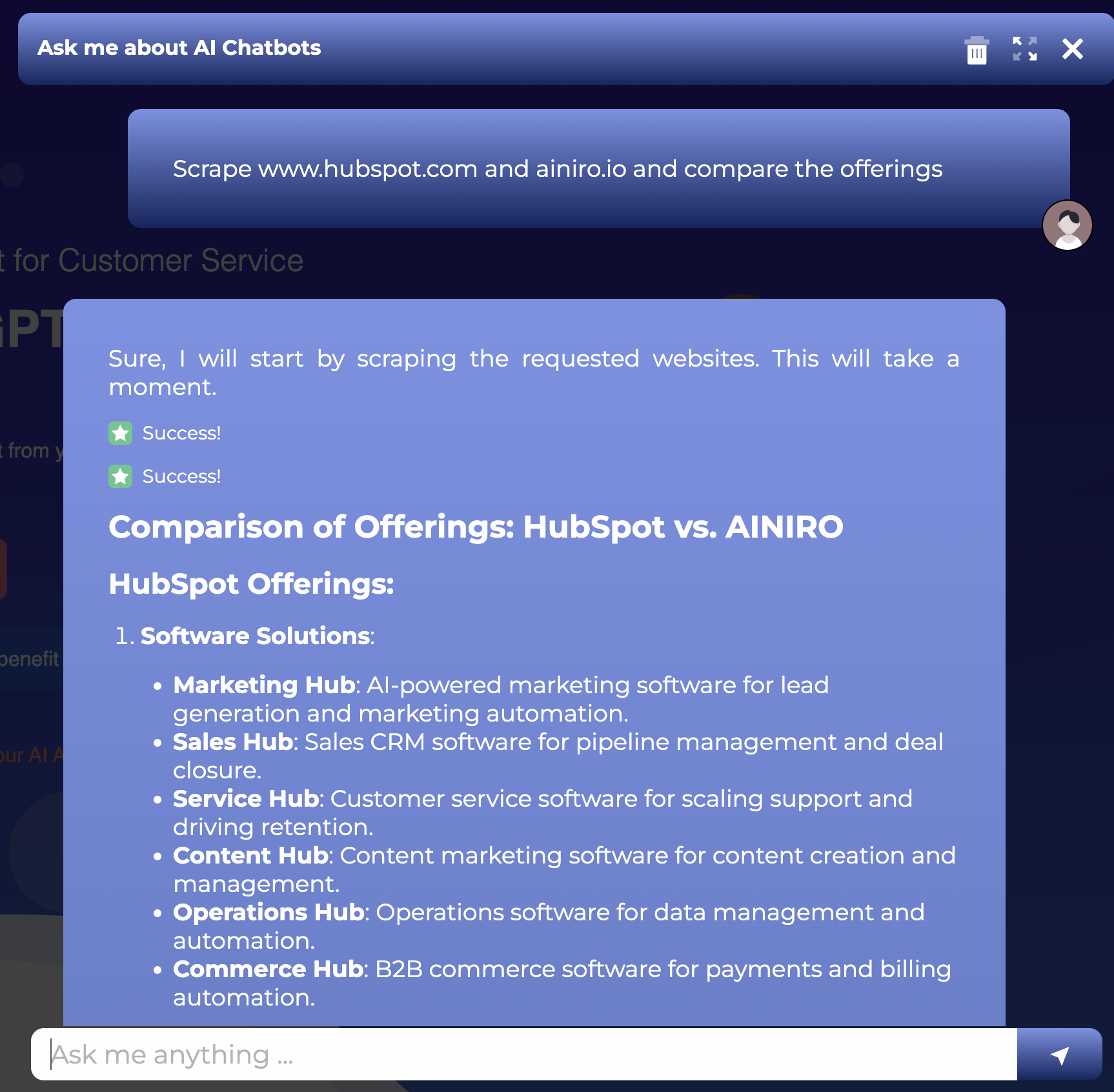

However, to facilitate this revolution, we (devs) need to create basic building block functions that No-Code devs can assemble together without having to code. This allows No-Code devs to prompt engineer our basic building blocks together, creating fascinating results with complex functionality. Below is an example of how prompt engineering can be used inside of the chatbot itself, to create "complex functionality", leveraging AI to generate some result.

The above is probably an example that is within reach of 90% of the world's "citizens" to understand, arguably becoming the "democratization of software development", which again of course is the big mantra of the No-Code movement.

The No-Code revolution isn't about No-Code, it's about AI and prompt engineering

Wrapping up

I haven't had this much fun since I was 8 years old. AI functions are by far the coolest thing I've worked on for a very, very, very long time. By intelligently combining AI functions together, we can basically completely eliminate the need for a UI, having the AI chatbot completely replace the UI of any application you've used so far in your life - at least in theory.

Then later, as we add the Whisper API on top of our AI chatbot, you're basically left with "an AI app" you can speak to through an earpiece, completely eliminating the need for a screen.

AI functions are something we take very seriously at AINIRO, and definitely one of our key focal areas in the future, due to the capacity these have for basically becoming a "computer revolution", where your primary interface for interacting with your computer becomes your voice and/or natural language.

In addition, our AI functions feature also makes "software development" much more available for the masses, including those with no prior coding skills - So it becomes a golden opportunity for software developers and No-Coders to collaboratively work together, to create complex functionality, solving real world problems, while democratizing software development for the masses.

To put the last statement into perspective, realise that roughly 0.3% of the world's population can create software, while probably 95% of the world's population can prompt engineer, and apply basic logic to an AI chatbot, creating beautiful software solutions in the process. Hence ...

The No-Code Revolution is AI and prompt engineering, and AINIRO is in the middle of it all ... 😊