Magic Cloud has 60x the Performance of Lovable

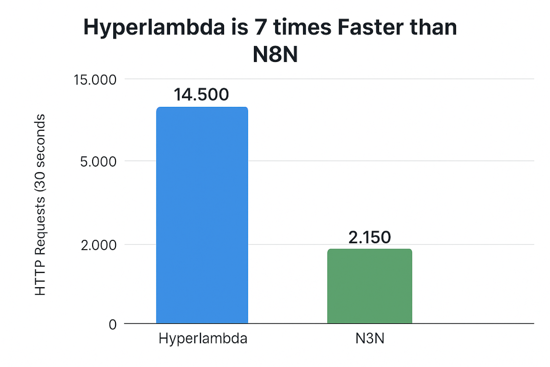

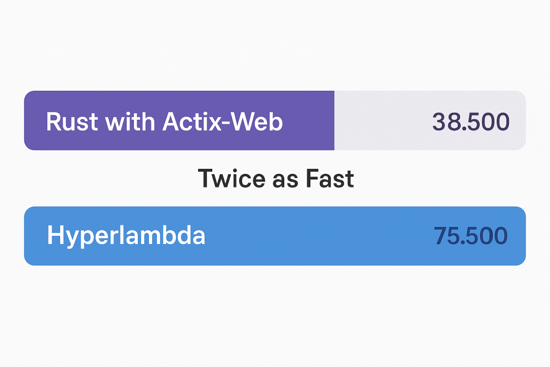

When you generate Hyperlambda code using Magic, we first of all use GPT-4.1-mini, a model with a resource cost of about 10% of the larger models. In addition, Python and JS require roughly 10x as many tokens as the equivalent Hyperlambda solution. However, the biggest difference comes in "execution". Once the Hyperlambda code is generated, Magic executes it in-process, while Lovable needs to deploy to a new virtual server. This last point probably makes the resource costs associated with generating code using Lovable about 1,000,000 times more expensive. Literally!
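To make the generation side of that argument concrete, here is a back-of-the-envelope sketch in Python. It only multiplies the two rough figures quoted above (a model at about 10% of the cost, and about 1/10 of the tokens). The constant and function names are mine, purely for illustration, and the deployment overhead is deliberately left out since I only have an estimate for it.

```python
# Relative generation cost, using only the rough figures quoted above.
# These are estimates from the text, not measured prices.
MODEL_COST_FACTOR = 0.10  # GPT-4.1-mini at about 10% of a larger model's cost
TOKEN_FACTOR = 1 / 10     # Hyperlambda needing about 1/10 of the tokens


def relative_generation_cost(model_factor: float, token_factor: float) -> float:
    """Cost of generating a solution, relative to a large model emitting Python/JS."""
    return model_factor * token_factor


relative = relative_generation_cost(MODEL_COST_FACTOR, TOKEN_FACTOR)
print(f"Relative generation cost: {relative:.2%}")
print(f"Generation alone is roughly {1 / relative:.0f}x cheaper")
```

In other words, the cheaper model and the shorter code together account for roughly two orders of magnitude before execution costs even enter the picture.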

In fact, I could probably execute the generated Hyperlambda function billions of times before it costs as much as Lovable needs to "deploy" your code, resulting in a TCO of literally 0.00001% compared to Lovable.

Evolving AI agents

This means we don't need to think of code as a "static thing", and we no longer need to separate the process of generating code from the process of executing it. We can literally deliver natural language APIs that generate the code required to solve the problem at hand "on the fly", and return the result of the execution within a couple of seconds in total.
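As a rough analogy for what "generate and execute in the same process" means, consider the following minimal Python sketch. This is not how Magic is implemented, and it's not Hyperlambda. The `fake_generate` function is a hypothetical stand-in for the LLM call, and `build_tool` is a name I made up for the example. The point is only that there is no deployment step between generating the code and running it.

```python
from typing import Callable, Dict


def fake_generate(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call.

    Returns Python source that defines a function named `tool`.
    """
    return "def tool(a, b):\n    return a + b\n"


def build_tool(prompt: str, generate: Callable[[str], str] = fake_generate) -> Callable:
    """Generate source code from a prompt and load it in the current process."""
    source = generate(prompt)
    namespace: Dict[str, object] = {}
    # Executed in-process: no build, no deploy, no new server.
    # Real systems must sandbox generated code before running it.
    exec(source, namespace)
    return namespace["tool"]


tool = build_tool("add two numbers")
print(tool(2, 3))
```

The generated function is available to call as soon as `build_tool` returns, which is the property the paragraph above relies on.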

Basically, the last "tool" you will ever need for your AI agent ...

To understand what I mean here, consider the following screenshot, and notice how the total execution time of that function was 9.8 seconds!

If I were to use Lovable for the above, I would need to "deploy it", and it would cost me almost $1. With Hyperlambda it's literally so inexpensive I don't even need to cache the function for future invocations, and I could probably execute the above function 1,000 times before I have spent $1. This allows for "evolving AI agents" that are literally "creating new tools" as they need them. Basically, an AI agent with "every single tool that is possible to build".
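The numbers in the paragraph above can be turned into a quick break-even calculation. The sketch below assumes the two rough figures I just gave (about $1 per deploy, and about 1,000 executions per dollar). The names are hypothetical, and the results are estimates, not measurements.

```python
# Rough figures from the paragraph above; both are estimates.
DEPLOY_COST_USD = 1.00          # "almost $1" per deploy, rounded up
INVOCATIONS_PER_DOLLAR = 1_000  # about 1,000 executions before spending $1


def cost_per_invocation(invocations_per_dollar: int) -> float:
    """Approximate cost in USD of one generate-and-execute invocation."""
    return 1.0 / invocations_per_dollar


def break_even_invocations(deploy_cost: float, per_invocation: float) -> int:
    """Number of invocations that cost as much as a single deploy."""
    return round(deploy_cost / per_invocation)


per_call = cost_per_invocation(INVOCATIONS_PER_DOLLAR)
break_even = break_even_invocations(DEPLOY_COST_USD, per_call)
print(f"Cost per invocation: ${per_call:.4f}")
print(f"Invocations per single deploy: {break_even}")
```

With these assumptions, a tool that is regenerated and executed from scratch on every call only catches up with one deploy after about a thousand calls, which is why caching the function isn't worth the trouble.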

Need a new tool? Tell your AI agent to create one. Time2market? 3 seconds!

In the following video I'm going through the above performance differences, explaining them in detail.

Notice, the difference between Lovable and Magic Cloud would be similar if you compared Hyperlambda to Cursor AI, Bolt44, Manus AI, etc.